Author: nickvenden

MidJourney images for reference

The Stream – a Sonnet and its Music in an Immersive Experience

As a submission to Siggraph 2021-Online Art Gallery I created a new immersive experience. “The Stream” is a sonnet by Don Paterson from his book, Orpheus – A Version of Rilke (note 1).

______________________________________________

A NOTE about this low-res video: In VR and 4K video, pointclouds are crisp bits of color-vertex light. The constant flickering and smearing artifacts here are products of Siggraph’s 300 MB file size limit. Also, several of the images below are frame-grabs.

___________________________________________________

ABOUT THE PROCESS

Created in covid-19 isolation, by myself, The Stream felt like “writing from the underworld of nightmarish times, to summon up the beautiful,” as a critic of Don Paterson put it. The first word of the poem is “God,” so the reader-viewer senses what’s ahead. Although Paterson takes an ambivalent, even cynical view of a spiritual life, in the last three lines there is a volta, a turning to hope: “We pray to keep it near us, as the lamb / might beg the shepherd for its bell, / from its quietest instinct.” Paterson has a wonderful ear, a singing voice, and clear images. Perfect for music within an immersive experience.

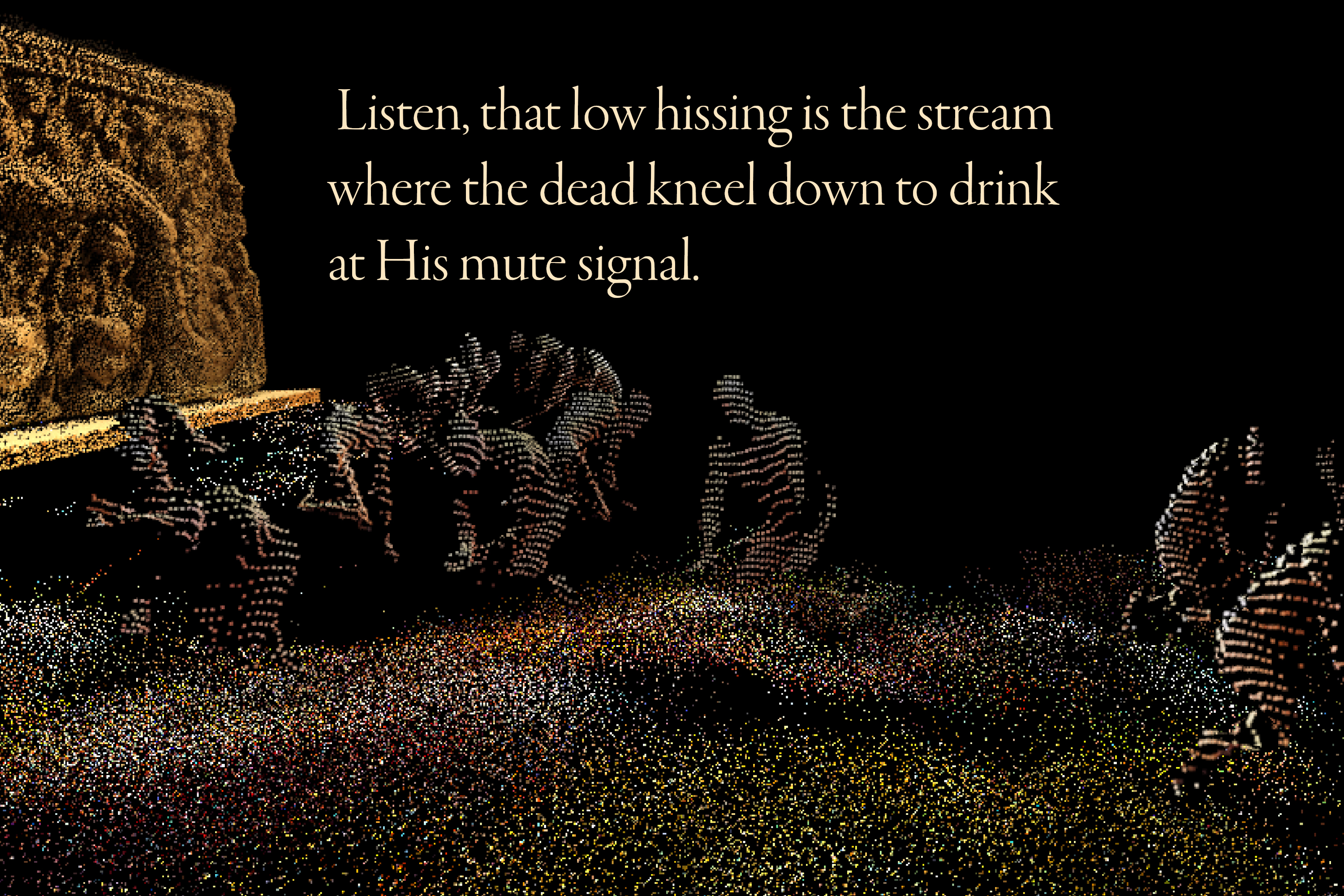

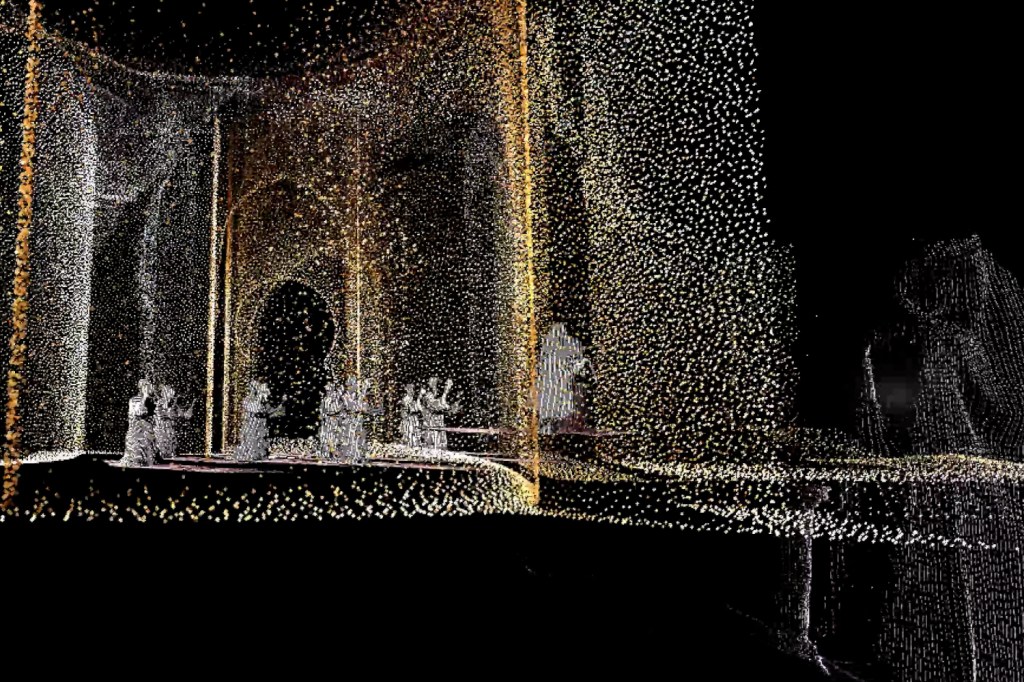

Nearly every line of the sonnet inspired a new ‘scene’ constructed using Lidar scans and photogrammetric reconstructions. These very brief scenes can be explored in 6-DOF (six degrees of freedom) VR as the volumetric video captures play at human scale. Using the DepthKit plugin and Unity’s own Timeline and Cinemachine, I was able to synchronize the volumetric video clips to the music with frame accuracy.

I composed the music, sang, played the piano, created the volumetric video using myself for the 3D models, and constructed everything for VR in Unity3D—by myself. It was covid. There was no one to help.

Why create with pointclouds? They’ve been so misused ever since “Notes on Blindness” (2). Although I’m only a cut-and-paste programmer, I wanted to explore the beautiful effects that HLSL compute-shaders can create with large pointclouds. My starting point was the scripts of the Unity guru Keijiro Takahashi (3).

The software and languages used include: Unity3D, DepthKit Pro and its Unity plugin, CloudCompare, MeshLab, Unity Timeline/Cinemachine, C#, HLSL for shaders, and more.

I won’t interpret the poem’s meaning because this immersive experience is just that: a visual exegesis of the poem. But I’ll offer one example: On the line, “He accepts even our purest gifts,” I created a robed woman who presents a gift at the feet of Gabriel. It’s a mise en abyme, a Droste Effect, a picture recursively appearing within itself. She offers herself as her purest gift. Recursively. Infinitely. The poet continues: “[God] with the same indifference and stony calm, / standing motionless to face the rift our each enquiry opens into his realm.” We can only offer.

_____________________________________________________

NOTES

(1) The poem is used with permission of the author’s agent Peter Straus, Peters@rcwlitagency.com.

(2) Notes On Blindness: Into Darkness, an immersive virtual reality (VR) project for John M. Hull (1935-2015). Sundance 2016.

(3) https://github.com/keijiro

_____________________________________________________

THE FULL TEXT

Here is the text as set to music, taken from an earlier version published as “Stream” in Poetry Magazine https://www.poetryfoundation.org/poetrymagazine/browse?contentId=42217

God is the place that always heals over,

however often we tear at it. We are all so

jagged, forever having to know,

but too great to show his favour or disfavour

He accepts even the purest of our gifts

with the same indifference and stony calm

standing motionless to face the rift

our each inquiry opens in his realm.

Listen: that low hissing is the stream

where the dead kneel down to drink

at his mute signal.

We pray to keep it near us, as the lamb

might beg the shepherd for its bell,

from its quietest instinct.

___________________________________________

THE VOLUMETRIC VIDEO CAPTURE

I shot all the Azure Kinect volumetric video captures by myself in a large church meeting hall. White fabric, for the cassock, robes, and dresses, worked best for the camera. You can see the Kinect depth camera on a simple monopod with pistol grip just behind the laptop. On the right side of the table is the Oculus Rift. Significantly, I was able to port my DepthKit video captures immediately into the DepthKit Unity plugin and view them in 360 with the Oculus Rift. Immediate feedback.

I discovered that the DepthKit textures were not convincing, even distracting, so I got rid of the green screen and used black duvetyne (velvet drape) on the floor of a larger open area; the cutoff of the depth camera took care of removing the background. However, the black duvetyne, rather than disappearing, showed up gray-purple in the capture. Nice, actually.

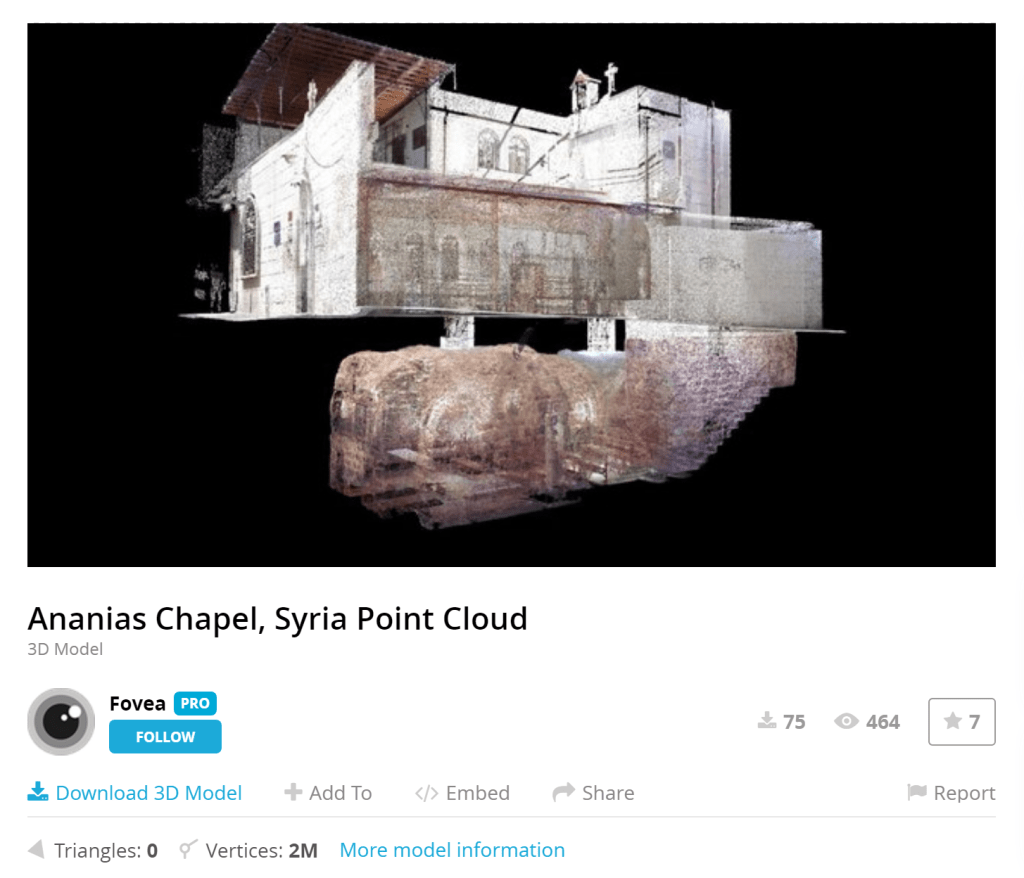
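The depth cutoff mentioned above can be pictured as a simple range test on each captured point. What follows is only a minimal sketch in plain Python, not the DepthKit or Unity implementation: the point layout, the function name `apply_depth_cutoff`, and the `near`/`far` distances are all assumptions made up for illustration.

```python
from typing import List, Tuple

# Hypothetical point layout: (x, y, z, r, g, b), with z as the distance
# from the depth camera in metres. This is an assumption for the sketch.
Point = Tuple[float, float, float, int, int, int]

def apply_depth_cutoff(points: List[Point], near: float = 0.5, far: float = 3.0) -> List[Point]:
    """Keep only the points whose depth lies inside [near, far]."""
    return [p for p in points if near <= p[2] <= far]

# Made-up sample values, chosen only to show the effect of the cutoff.
sample = [
    (0.0, 1.0, 1.8, 240, 240, 240),  # performer in white fabric
    (0.2, 0.0, 2.1, 90, 80, 100),    # dark floor drape, still inside the range
    (1.5, 1.2, 6.0, 30, 200, 40),    # far wall, beyond the cutoff
]

kept = apply_depth_cutoff(sample, near=0.5, far=3.0)
print(len(kept))  # 2
```

In this toy data the far wall drops out without any green screen, while the floor drape stays because it sits inside the depth range, which is consistent with the drape still showing up in the capture.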

The loss of textures dictated a world built from pointclouds and color-vertex fields. Searching SketchFab for “pointcloud, Lidar, scan, photogrammetry, etc.” brought me some great things. The textured photogrammetry objects/props had to be treated in CloudCompare or MeshLab to strip off the textures, crop, etc., then imported into Unity as .ply objects. Unity3D may now recognize formats other than .ply.
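For readers unfamiliar with .ply, here is a rough sketch of what a texture-free, color-per-vertex pointcloud looks like in the ASCII variant of the format. I did this step in CloudCompare and MeshLab, not in code; the function name `points_to_ascii_ply` and the sample point are hypothetical, and some importers may expect binary rather than ASCII PLY.

```python
from typing import List, Tuple

# Hypothetical point layout: position as floats, color as 0-255 integers.
ColoredPoint = Tuple[float, float, float, int, int, int]

def points_to_ascii_ply(points: List[ColoredPoint]) -> str:
    """Serialize points as an ASCII PLY string with per-vertex color and no faces or textures."""
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(points)}",
        "property float x",
        "property float y",
        "property float z",
        "property uchar red",
        "property uchar green",
        "property uchar blue",
        "end_header",
    ]
    # One line per vertex: x y z red green blue
    body = [f"{x} {y} {z} {r} {g} {b}" for x, y, z, r, g, b in points]
    return "\n".join(header + body) + "\n"

ply_text = points_to_ascii_ply([(0.0, 1.0, 2.0, 255, 128, 0)])
print(ply_text)
```

The point of the sketch is that all the color lives on the vertices themselves, so nothing is lost when the texture images are stripped away.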

I had to model very little, but used CC-licensed objects instead to create sparse scenes inspired by the lyrics.

_________________________________________________

A PARTIAL LIST OF THE CREATIVE COMMONS LICENSED ASSETS USED

ANANIAS CHAPEL

LiDAR – Terrestrial. Collected by Directorate General of Antiquities and Museums. Distributed by Open Heritage 3D. License:

CC Attribution-NonCommercial-ShareAlike

______________________________

PUERTA DE TOLEDO

https://sketchfab.com/3d-models/point-cloud-puerta-de-toledo-spain-97998d1a1f3d4d2387e472eacd28ae39

License:

CC Attribution

NOTE: All assets used were free or purchased and licensed under CC. I’ll update this list so I can credit the artists and technicians.